The Complete Playbook · 103 Experiments

The growth experiment library for B2B teams

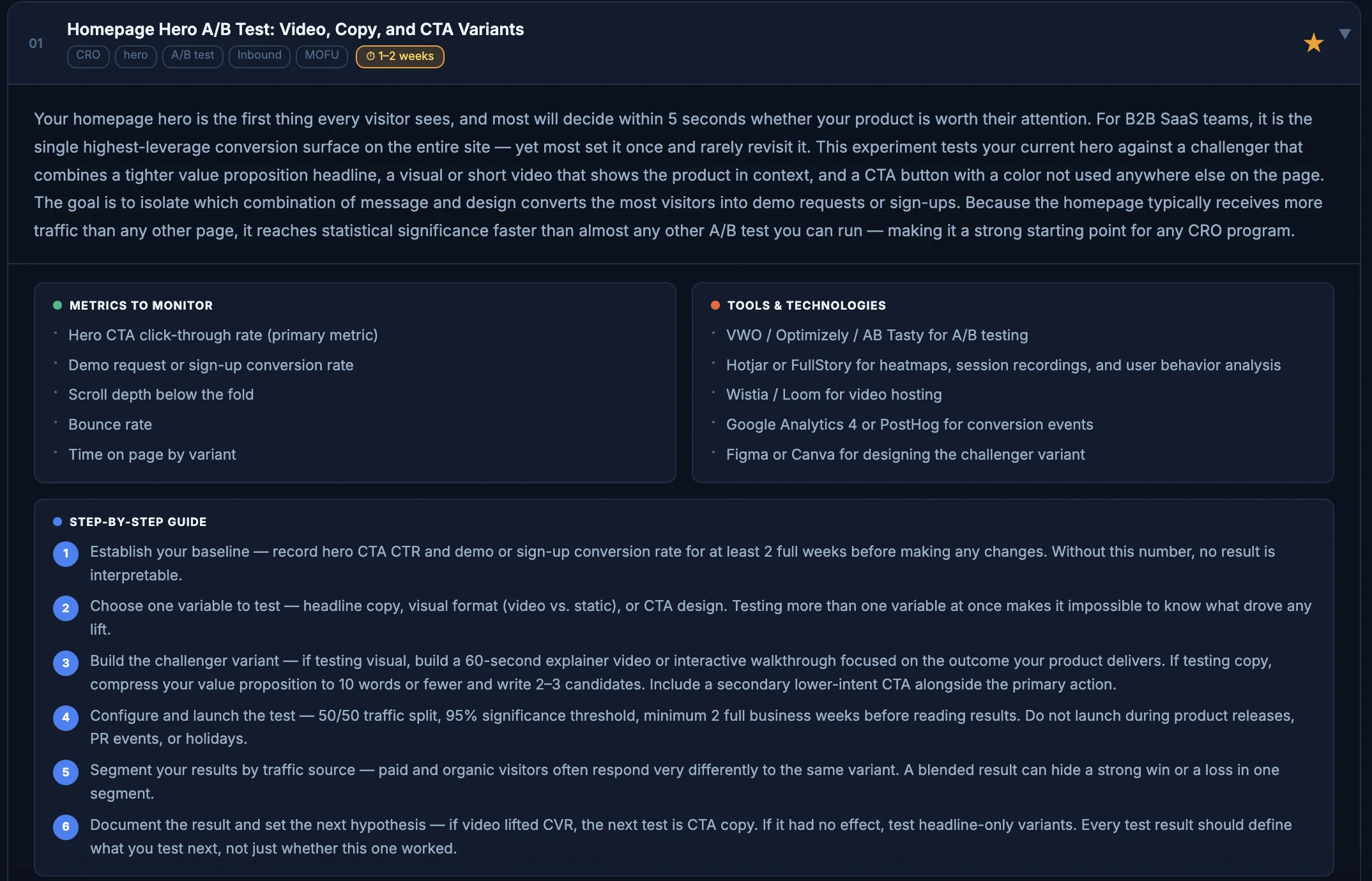

A tactical, category-by-category guide to running experiments across every growth surface — from your website to paid ads, email, events, content, and beyond.

103 Experiments · 8 Categories · 7 Attributes each